目录

- 1. 上一节回顾

- 2. 数据集划分

- 3. 完整代码

1. 上一节回顾

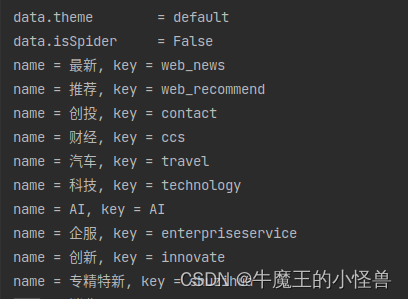

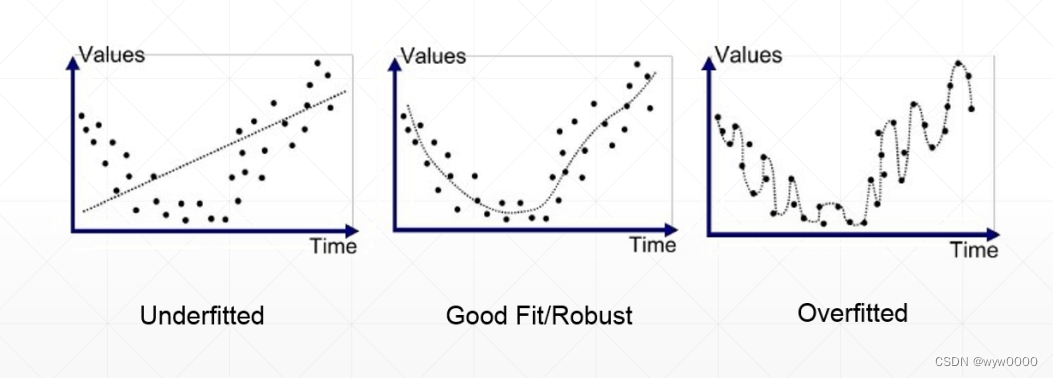

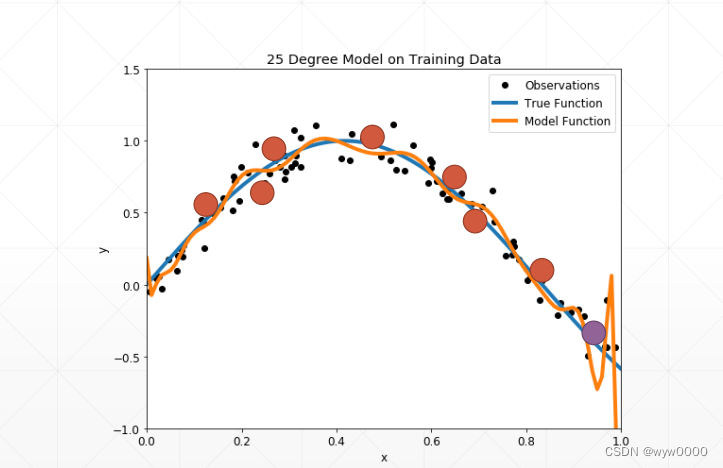

下列图中三种曲线分别代表了欠拟合、好的拟合和过拟合

下图为过拟合曲线,那么如何来检测过拟合呢?将数据集划分为train和val(validation)val是用来测试训练过程是否过拟合的。

2. 数据集划分

数据集一般划分为train、val(当划分为2个数据集时,val又被称为test)、test

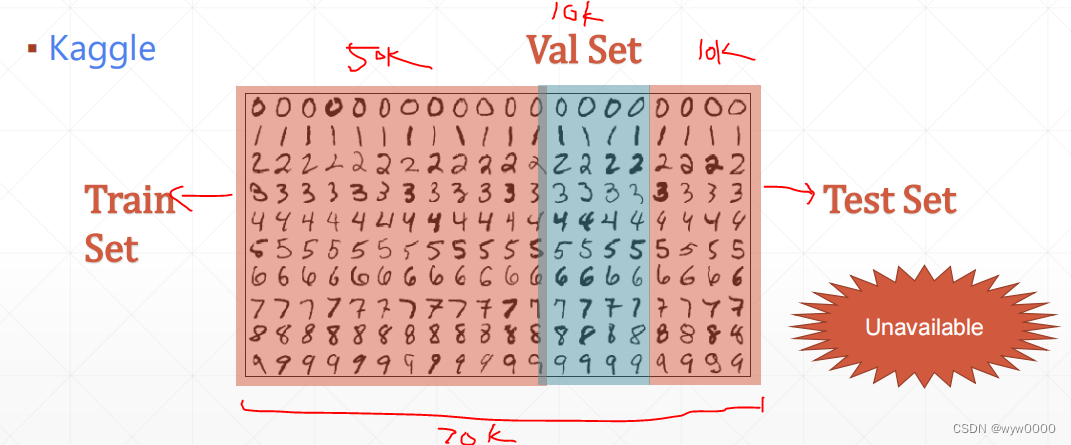

以MINIST数据集为例,下图中将数据集化为为train和test(val)

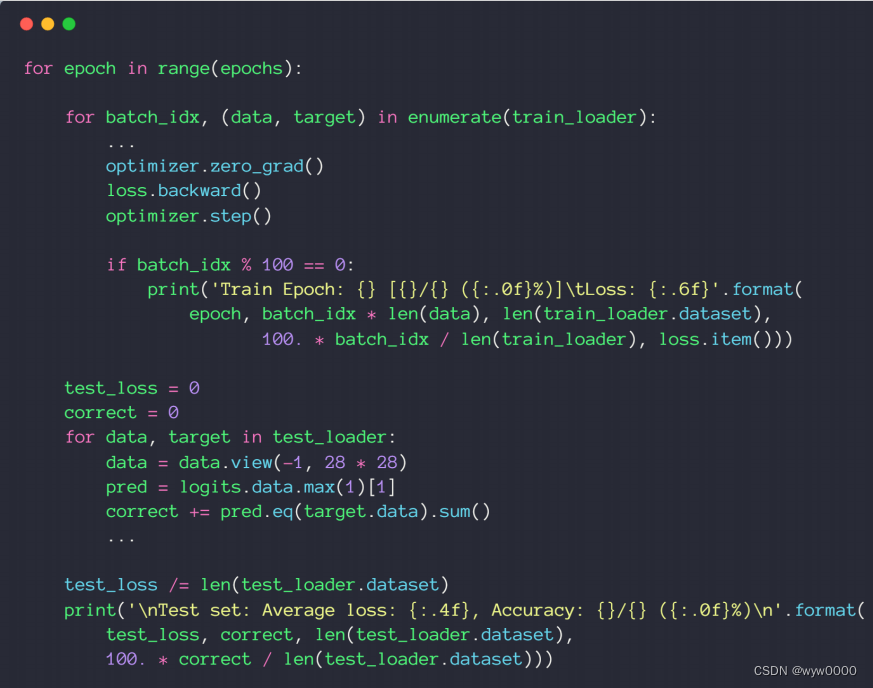

下图中每train一个epoch就进行依次test看是否发生了过拟合,并保存当时的checkpoint,训练完成后选择性能最好的checkpoints即可。

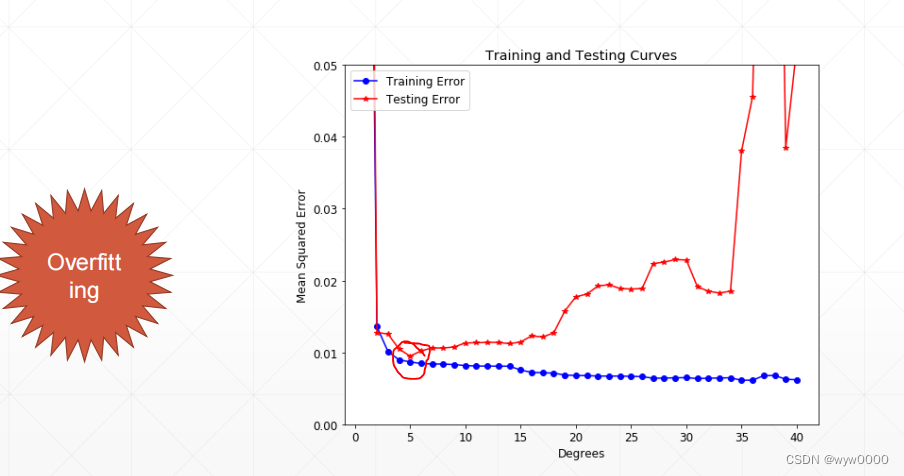

下图中在标记点之后train的loss变换不明显,而test loss却升高了,这就是发生了overfitting过拟合。

如下图:假如又70k数据train50k,val10k,test10k,那么为什么要又test数据集呢?作用是什么呢?test数据集是用来验证模型的性能的,不能用于train和val否则会造成数据污染,也可称为作弊。

pytorch只能划分train和test,即通过train_db = datasets.MNIST('../data', train=True, download=True, 中的train=true即为train数据集,否则为test数据集 ,那么剩下的train我们要人为划分为train和val。

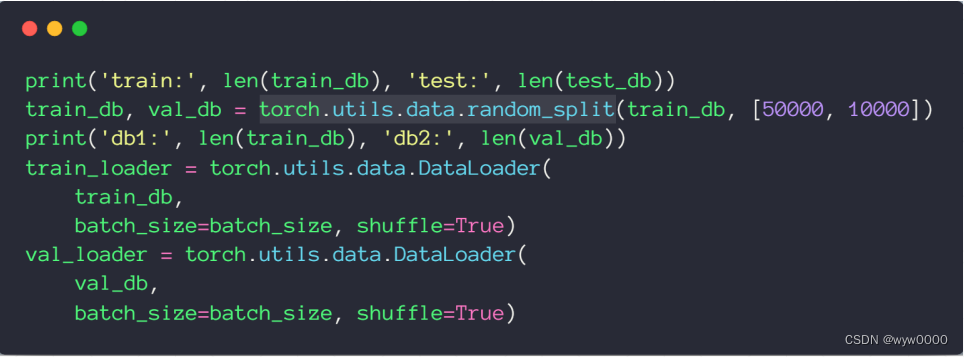

如下图所示:通过train_db, val_db = torch.utils.data.random_split(train_db, [50000, 10000]) 实现把train划分为train50k,val10k

总结:train数据用来训练,val数据用来检测训练是否过拟合的,test数据集是用来验证模型性能的

3. 完整代码

import torch

import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

from torchvision import datasets, transformsbatch_size=200

learning_rate=0.01

epochs=10train_db = datasets.MNIST('../data', train=True, download=True,transform=transforms.Compose([transforms.ToTensor(),transforms.Normalize((0.1307,), (0.3081,))]))

train_loader = torch.utils.data.DataLoader(train_db,batch_size=batch_size, shuffle=True)test_db = datasets.MNIST('../data', train=False, transform=transforms.Compose([transforms.ToTensor(),transforms.Normalize((0.1307,), (0.3081,))

]))

test_loader = torch.utils.data.DataLoader(test_db,batch_size=batch_size, shuffle=True)print('train:', len(train_db), 'test:', len(test_db))

train_db, val_db = torch.utils.data.random_split(train_db, [50000, 10000])

print('db1:', len(train_db), 'db2:', len(val_db))

train_loader = torch.utils.data.DataLoader(train_db,batch_size=batch_size, shuffle=True)

val_loader = torch.utils.data.DataLoader(val_db,batch_size=batch_size, shuffle=True)class MLP(nn.Module):def __init__(self):super(MLP, self).__init__()self.model = nn.Sequential(nn.Linear(784, 200),nn.LeakyReLU(inplace=True),nn.Linear(200, 200),nn.LeakyReLU(inplace=True),nn.Linear(200, 10),nn.LeakyReLU(inplace=True),)def forward(self, x):x = self.model(x)return xdevice = torch.device('cuda:0')

net = MLP().to(device)

optimizer = optim.SGD(net.parameters(), lr=learning_rate)

criteon = nn.CrossEntropyLoss().to(device)for epoch in range(epochs):for batch_idx, (data, target) in enumerate(train_loader):data = data.view(-1, 28*28)data, target = data.to(device), target.cuda()logits = net(data)loss = criteon(logits, target)optimizer.zero_grad()loss.backward()# print(w1.grad.norm(), w2.grad.norm())optimizer.step()if batch_idx % 100 == 0:print('Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}'.format(epoch, batch_idx * len(data), len(train_loader.dataset),100. * batch_idx / len(train_loader), loss.item()))test_loss = 0correct = 0for data, target in val_loader:data = data.view(-1, 28 * 28)data, target = data.to(device), target.cuda()logits = net(data)test_loss += criteon(logits, target).item()pred = logits.data.max(1)[1]correct += pred.eq(target.data).sum()test_loss /= len(val_loader.dataset)print('\nVAL set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(test_loss, correct, len(val_loader.dataset),100. * correct / len(val_loader.dataset)))test_loss = 0

correct = 0

for data, target in test_loader:data = data.view(-1, 28 * 28)data, target = data.to(device), target.cuda()logits = net(data)test_loss += criteon(logits, target).item()pred = logits.data.max(1)[1]correct += pred.eq(target.data).sum()test_loss /= len(test_loader.dataset)

print('\nTest set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(test_loss, correct, len(test_loader.dataset),100. * correct / len(test_loader.dataset)))