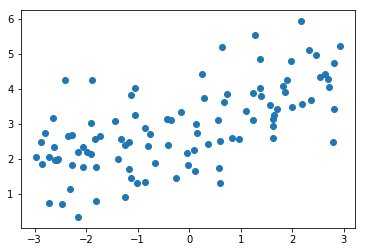

Polynomial Regression

Generate the data

import numpy as np

import matplotlib.pyplot as plt

x = np.random.uniform(-3, 3, size=100)

X = x.reshape(-1, 1)

y = 0.5 * x**2 + x + 2 + np.random.normal(0, 1, 100)

plt.scatter(x, y)

plt.show()

Fit the dataset with linear regression

from sklearn.linear_model import LinearRegression

lin_reg = LinearRegression()

lin_reg.fit(X, y)

y_predict = lin_reg.predict(X)

plt.scatter(x, y)

plt.plot(x, y_predict, color='r')

plt.show()

The straight line fits poorly, so add x**2 as a second feature

X2 = np.hstack([X, X**2])

lin_reg2 = LinearRegression()

lin_reg2.fit(X2, y)

y_predict2 = lin_reg2.predict(X2)

plt.scatter(x, y)

plt.plot(np.sort(x), y_predict2[np.argsort(x)], color='r')

plt.show()

Polynomial regression and Pipeline in scikit-learn

Generate the dataset

import numpy as np

import matplotlib.pyplot as plt

x = np.random.uniform(-3, 3, size=100)

X = x.reshape(-1, 1)

y = 0.5 * x**2 + x + 2 + np.random.normal(0, 1, 100)

Preprocessing: add the new polynomial features

from sklearn.preprocessing import PolynomialFeatures

poly = PolynomialFeatures(degree=2)

poly.fit(X)

X2 = poly.transform(X)

Train

from sklearn.linear_model import LinearRegression

lin_reg2 = LinearRegression()

lin_reg2.fit(X2, y)

y_predict2 = lin_reg2.predict(X2)

plt.scatter(x, y)

plt.plot(np.sort(x), y_predict2[np.argsort(x)], color='r')

plt.show()

About PolynomialFeatures

X = np.arange(1, 11).reshape(-1, 2)

poly = PolynomialFeatures(degree=2)

poly.fit(X)

X2 = poly.transform(X)

X2

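With two input columns x1 and x2, the result has 6 columns: 1 column for degree 0 (all ones), 2 columns for degree 1 (x1, x2), and 3 columns for degree 2 (x1**2, x1*x2, x2**2).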
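The column layout described above can be checked by hand. The sketch below rebuilds the six columns with plain NumPy and compares them with the transformer output; the names `x1`, `x2` and `expected` are introduced here for illustration only.

```python
import numpy as np
from sklearn.preprocessing import PolynomialFeatures

# Same toy input as above: 5 samples, 2 features (called x1 and x2 here).
X = np.arange(1, 11).reshape(-1, 2)
x1, x2 = X[:, 0], X[:, 1]

X2 = PolynomialFeatures(degree=2).fit_transform(X)

# Build the columns by hand in the order the transformer emits them:
# 1, x1, x2, x1^2, x1*x2, x2^2
expected = np.column_stack([np.ones(len(X)), x1, x2, x1**2, x1 * x2, x2**2])

print(X2.shape)
print(np.allclose(X2, expected))
```

The number of generated columns grows quickly with both the degree and the number of input features, which is one reason high degrees overfit so easily.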

Pipeline

x = np.random.uniform(-3, 3, size=100)

X = x.reshape(-1, 1)

y = 0.5 * x**2 + x + 2 + np.random.normal(0, 1, 100)

from sklearn.pipeline import Pipeline

from sklearn.preprocessing import StandardScaler

poly_reg = Pipeline([
    ("poly", PolynomialFeatures(degree=2)),
    ("std_scaler", StandardScaler()),
    ("lin_reg", LinearRegression())
])

Data passed to poly_reg runs through the pipeline steps in order,

so the three steps collapse into a single call.

poly_reg.fit(X, y)

y_predict = poly_reg.predict(X)

plt.scatter(x, y)

plt.plot(np.sort(x), y_predict[np.argsort(x)], color='r')

plt.show()
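To make the "three steps in one" point concrete, the following sketch fits the same pipeline and then repeats the three steps by hand, checking that both routes give the same predictions. The fixed seed and the variable names `y_pipeline` and `y_manual` are assumptions added for this illustration.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import PolynomialFeatures, StandardScaler

rng = np.random.default_rng(0)  # fixed seed, assumed here for reproducibility
x = rng.uniform(-3, 3, size=100)
X = x.reshape(-1, 1)
y = 0.5 * x**2 + x + 2 + rng.normal(0, 1, size=100)

# Route 1: a single fit/predict through the pipeline.
poly_reg = Pipeline([
    ("poly", PolynomialFeatures(degree=2)),
    ("std_scaler", StandardScaler()),
    ("lin_reg", LinearRegression()),
])
poly_reg.fit(X, y)
y_pipeline = poly_reg.predict(X)

# Route 2: the same three steps performed manually.
poly = PolynomialFeatures(degree=2)
scaler = StandardScaler()
lin_reg = LinearRegression()
X_poly = poly.fit_transform(X)
X_scaled = scaler.fit_transform(X_poly)
lin_reg.fit(X_scaled, y)
y_manual = lin_reg.predict(scaler.transform(poly.transform(X)))

print(np.allclose(y_pipeline, y_manual))
```

The practical benefit is that new data only needs `poly_reg.predict(...)`; the transformers fitted on the training data are reused automatically.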

Overfitting and Underfitting

Create the dataset

import numpy as np

import matplotlib.pyplot as plt

np.random.seed(666)

x = np.random.uniform(-3.0, 3.0, size=100)

X = x.reshape(-1, 1)

y = 0.5 * x**2 + x + 2 + np.random.normal(0, 1, size=100)

plt.scatter(x, y)

plt.show()

Use linear regression

from sklearn.linear_model import LinearRegression

lin_reg = LinearRegression()

lin_reg.fit(X, y)

lin_reg.score(X, y)

y_predict = lin_reg.predict(X)

plt.scatter(x, y)

plt.plot(np.sort(x), y_predict[np.argsort(x)], color='r')

plt.show()

Underfitting

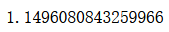

Mean squared error

from sklearn.metrics import mean_squared_error

y_predict = lin_reg.predict(X)

mean_squared_error(y, y_predict)

Use polynomial regression

from sklearn.pipeline import Pipeline

from sklearn.preprocessing import PolynomialFeatures

from sklearn.preprocessing import StandardScaler

def PolynomialRegression(degree):
    # Polynomial features -> standardization -> linear regression
    return Pipeline([
        ("poly", PolynomialFeatures(degree=degree)),
        ("std_scaler", StandardScaler()),
        ("lin_reg", LinearRegression())
    ])

poly2_reg = PolynomialRegression(degree=2)

poly2_reg.fit(X, y)

Predict and check the mean squared error

y2_predict = poly2_reg.predict(X)

mean_squared_error(y, y2_predict)

plt.scatter(x, y)

plt.plot(np.sort(x), y2_predict[np.argsort(x)], color='r')

plt.show()

degree=10

poly10_reg = PolynomialRegression(degree=10)

poly10_reg.fit(X, y)

y10_predict = poly10_reg.predict(X)

mean_squared_error(y, y10_predict)

plt.scatter(x, y)

plt.plot(np.sort(x), y10_predict[np.argsort(x)], color='r')

plt.show()

degree=100

poly100_reg = PolynomialRegression(degree=100)

poly100_reg.fit(X, y)

y100_predict = poly100_reg.predict(X)

mean_squared_error(y, y100_predict)

plt.scatter(x, y)

plt.plot(np.sort(x), y100_predict[np.argsort(x)], color='r')

plt.show()

The actual curve, evaluated on evenly spaced points

X_plot = np.linspace(-3, 3, 100).reshape(100, 1)

y_plot = poly100_reg.predict(X_plot)

plt.scatter(x, y)

plt.plot(X_plot[:,0], y_plot, color='r')

plt.axis([-3, 3, 0, 10])

plt.show()

Overfitting

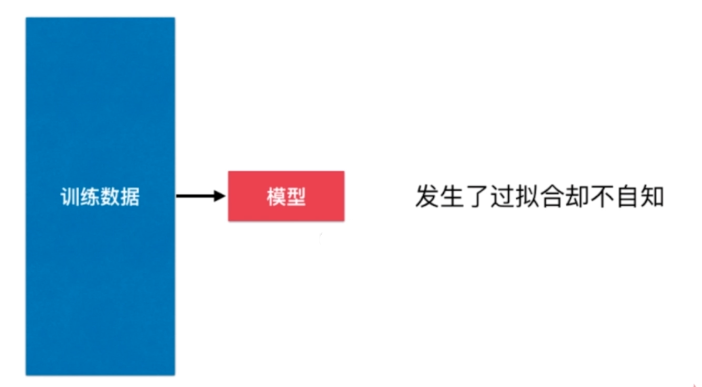

Why train test split matters

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=666)

lin_reg.fit(X_train, y_train)

y_predict = lin_reg.predict(X_test)

mean_squared_error(y_test, y_predict)

poly2_reg.fit(X_train, y_train)

y2_predict = poly2_reg.predict(X_test)

mean_squared_error(y_test, y2_predict)

poly10_reg.fit(X_train, y_train)

y10_predict = poly10_reg.predict(X_test)

mean_squared_error(y_test, y10_predict)

poly100_reg.fit(X_train, y_train)

y100_predict = poly100_reg.predict(X_test)

mean_squared_error(y_test, y100_predict)

Underfitting

The trained model cannot fully express the relationship in the data.

Overfitting

The trained model expresses too much of the noise in the data.

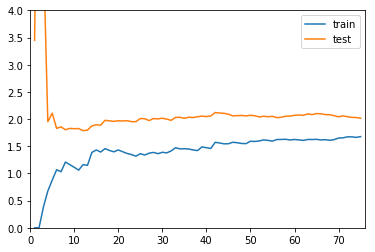

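Learning Curve

How the performance of the trained model changes as the number of training samples gradually increases.

Code

Generate the dataset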

import numpy as np

import matplotlib.pyplot as plt

np.random.seed(666)

x = np.random.uniform(-3.0, 3.0, size=100)

X = x.reshape(-1, 1)

y = 0.5 * x**2 + x + 2 + np.random.normal(0, 1, size=100)

plt.scatter(x, y)

plt.show()

Learning curve

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=10)

Linear model

from sklearn.linear_model import LinearRegression

from sklearn.metrics import mean_squared_error

train_score = []

test_score = []

# Loop, growing the training set one sample at a time

for i in range(1, 76):
    lin_reg = LinearRegression()
    lin_reg.fit(X_train[:i], y_train[:i])
    y_train_predict = lin_reg.predict(X_train[:i])
    train_score.append(mean_squared_error(y_train[:i], y_train_predict))
    y_test_predict = lin_reg.predict(X_test)
    test_score.append(mean_squared_error(y_test, y_test_predict))

Visualize the learning curve

plt.plot([i for i in range(1, 76)], np.sqrt(train_score), label="train")

plt.plot([i for i in range(1, 76)], np.sqrt(test_score), label="test")

plt.legend()

plt.show()

Wrap it in a function

def plot_learning_curve(algo, X_train, X_test, y_train, y_test):
    train_score = []
    test_score = []
    # Refit on the first i training samples and record train/test MSE
    for i in range(1, len(X_train)+1):
        algo.fit(X_train[:i], y_train[:i])
        y_train_predict = algo.predict(X_train[:i])
        train_score.append(mean_squared_error(y_train[:i], y_train_predict))
        y_test_predict = algo.predict(X_test)
        test_score.append(mean_squared_error(y_test, y_test_predict))
    # Plot RMSE against the number of training samples
    plt.plot([i for i in range(1, len(X_train)+1)], np.sqrt(train_score), label="train")
    plt.plot([i for i in range(1, len(X_train)+1)], np.sqrt(test_score), label="test")
    plt.legend()
    plt.axis([0, len(X_train)+1, 0, 4])
    plt.show()

plot_learning_curve(LinearRegression(), X_train, X_test, y_train, y_test)

Polynomial regression

from sklearn.preprocessing import PolynomialFeatures

from sklearn.preprocessing import StandardScaler

from sklearn.pipeline import Pipeline

def PolynomialRegression(degree):
    return Pipeline([
        ("poly", PolynomialFeatures(degree=degree)),
        ("std_scaler", StandardScaler()),
        ("lin_reg", LinearRegression())
    ])

poly2_reg = PolynomialRegression(degree=2)

plot_learning_curve(poly2_reg, X_train, X_test, y_train, y_test)

poly20_reg = PolynomialRegression(degree=20)

plot_learning_curve(poly20_reg, X_train, X_test, y_train, y_test)
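As a cross-check on the hand-written loop, scikit-learn ships a `learning_curve` helper in `sklearn.model_selection`. The sketch below is one possible way to use it on the same kind of data; the choice of 5 training sizes, 5 folds and the `neg_mean_squared_error` scorer are assumptions made for this example, not settings taken from the notes.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import learning_curve

np.random.seed(666)
x = np.random.uniform(-3.0, 3.0, size=100)
X = x.reshape(-1, 1)
y = 0.5 * x**2 + x + 2 + np.random.normal(0, 1, size=100)

# For each training-set size, the model is refit and scored on every fold.
train_sizes, train_scores, test_scores = learning_curve(
    LinearRegression(), X, y,
    train_sizes=np.linspace(0.1, 1.0, 5),
    cv=5,
    scoring="neg_mean_squared_error",
)

# Scores are negated MSE with one column per fold; average them, then take RMSE.
train_rmse = np.sqrt(-train_scores.mean(axis=1))
test_rmse = np.sqrt(-test_scores.mean(axis=1))
for n, tr, te in zip(train_sizes, train_rmse, test_rmse):
    print(n, tr, te)
```

Unlike the manual version, this averages over several splits, so the curve depends less on one particular train/test partition.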

The role of the test dataset

Problem: tuning against one fixed test dataset overfits to that particular test dataset

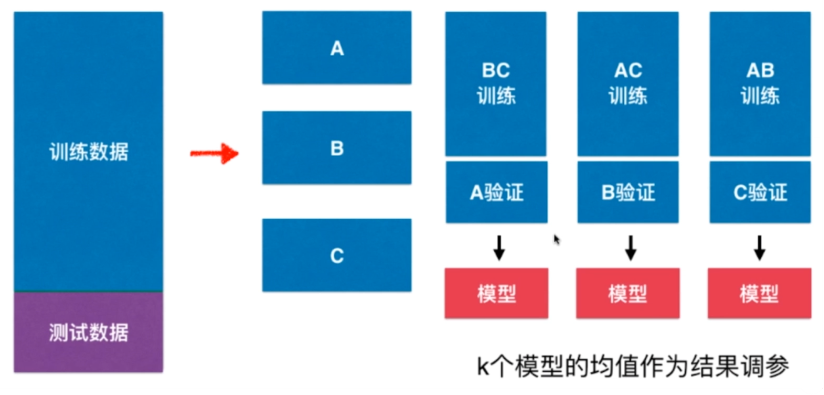

Cross Validation

Code

Load the data

import numpy as np

from sklearn import datasets

digits = datasets.load_digits()

X = digits.data

y = digits.target

Split the data

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.4, random_state=666)

Search for hyperparameters

from sklearn.neighbors import KNeighborsClassifier

best_k, best_p, best_score = 0, 0, 0

for k in range(2, 11):
    for p in range(1, 6):
        knn_clf = KNeighborsClassifier(weights="distance", n_neighbors=k, p=p)
        knn_clf.fit(X_train, y_train)
        # Scored directly on the test set
        score = knn_clf.score(X_test, y_test)
        if score > best_score:
            best_k, best_p, best_score = k, p, score

print("Best K =", best_k)

print("Best P =", best_p)

print("Best Score =", best_score)

Use cross validation

from sklearn.model_selection import cross_val_score

knn_clf = KNeighborsClassifier()

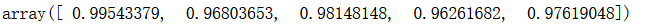

cross_val_score(knn_clf, X_train, y_train)
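To show what the `cross_val_score` call is doing, here is a minimal sketch that performs the fold loop by hand and compares the result with the library call. It uses an explicit, unshuffled `KFold` splitter so that both routes see identical folds; that choice is an assumption made for clarity (with an integer `cv` and a classifier, scikit-learn uses stratified folds instead).

```python
import numpy as np
from sklearn import datasets
from sklearn.model_selection import KFold, cross_val_score
from sklearn.neighbors import KNeighborsClassifier

digits = datasets.load_digits()
X, y = digits.data, digits.target

# Plain 5-fold split, shared by the manual loop and the library call.
kfold = KFold(n_splits=5)

manual_scores = []
for train_idx, valid_idx in kfold.split(X):
    # Train on 4 folds, validate on the held-out fold.
    clf = KNeighborsClassifier()
    clf.fit(X[train_idx], y[train_idx])
    manual_scores.append(clf.score(X[valid_idx], y[valid_idx]))

library_scores = cross_val_score(KNeighborsClassifier(), X, y, cv=kfold)

print(manual_scores)
print(np.allclose(manual_scores, library_scores))
```

Averaging the per-fold scores gives a validation estimate that does not depend on a single lucky or unlucky split.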

Search for hyperparameters

best_k, best_p, best_score = 0, 0, 0

for k in range(2, 11):
    for p in range(1, 6):
        knn_clf = KNeighborsClassifier(weights="distance", n_neighbors=k, p=p)
        # Score by the mean cross-validation accuracy on the training data only
        scores = cross_val_score(knn_clf, X_train, y_train)
        score = np.mean(scores)
        if score > best_score:
            best_k, best_p, best_score = k, p, score

print("Best K =", best_k)

print("Best P =", best_p)

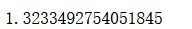

print("Best Score =", best_score)

Evaluate on the test set

best_knn_clf = KNeighborsClassifier(weights="distance", n_neighbors=2, p=2)

best_knn_clf.fit(X_train, y_train)

best_knn_clf.score(X_test, y_test)

Grid search

from sklearn.model_selection import GridSearchCV

param_grid = [
    {
        'weights': ['distance'],
        'n_neighbors': [i for i in range(2, 11)],
        'p': [i for i in range(1, 6)]
    }
]

grid_search = GridSearchCV(knn_clf, param_grid, verbose=1)

grid_search.fit(X_train, y_train)

The cv parameter

Split the dataset into 5 folds

cross_val_score(knn_clf, X_train, y_train, cv=5)

grid_search = GridSearchCV(knn_clf, param_grid, verbose=1, cv=5)

Drawback: every evaluation trains k models, so the whole search runs roughly k times slower.
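Once a grid search has been fitted, its result can be read from `best_params_`, `best_score_` and `best_estimator_`. The sketch below uses a deliberately small grid (3 values of `n_neighbors`, 2 values of `p`), which is an assumption made to keep the run short given the cost noted above.

```python
from sklearn import datasets
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.neighbors import KNeighborsClassifier

digits = datasets.load_digits()
X_train, X_test, y_train, y_test = train_test_split(
    digits.data, digits.target, test_size=0.4, random_state=666
)

# Small illustrative grid: 3 * 2 = 6 candidates, each fitted on 5 folds.
param_grid = [{
    "weights": ["distance"],
    "n_neighbors": [2, 3, 4],
    "p": [1, 2],
}]

grid_search = GridSearchCV(KNeighborsClassifier(), param_grid, cv=5)
grid_search.fit(X_train, y_train)

print(grid_search.best_params_)   # best combination found on the folds
print(grid_search.best_score_)    # its mean cross-validation accuracy

# best_estimator_ is refit on all of X_train; score it once on the test set.
best_knn_clf = grid_search.best_estimator_
print(best_knn_clf.score(X_test, y_test))
```

Taking the model from `best_estimator_` avoids hard-coding the winning hyperparameters by hand.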

Bias-Variance Trade-off

Model error = Bias + Variance + irreducible error
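For squared error, the informal statement above has a standard precise form. The notation here is an assumption introduced for this note: the data follow $y = f(x) + \varepsilon$ with $\mathbb{E}[\varepsilon] = 0$ and $\operatorname{Var}(\varepsilon) = \sigma^2$, and $\hat{f}$ is the model learned from a random training set.

```latex
\[
\mathbb{E}\!\left[\big(y - \hat{f}(x)\big)^2\right]
= \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{Bias}^2}
+ \underbrace{\mathbb{E}\!\left[\big(\hat{f}(x) - \mathbb{E}[\hat{f}(x)]\big)^2\right]}_{\text{Variance}}
+ \underbrace{\sigma^2}_{\text{irreducible error}}
\]
```

Note that the bias enters as its square; the expectation is taken over both the training sets and the noise.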

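Main cause of bias:

The assumptions made about the problem itself are wrong!

Example: using linear regression on non-linear data

Variance

A tiny perturbation of the data has a large effect on the model.

The usual cause is a model that is too complex.

Example: high-degree polynomial regression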

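Bias and variance

Some algorithms are inherently high-variance, such as kNN.

Non-parametric learning is usually high-variance, because it makes no assumptions about the data.

Some algorithms are inherently high-bias, such as linear regression.

Parametric learning is usually high-bias, because it makes very strong assumptions about the data.

Most algorithms have parameters that adjust bias and variance,

such as k in kNN,

or the polynomial degree when linear regression is extended to polynomial regression.

Bias and variance usually pull against each other.

Lowering bias raises variance.

Lowering variance raises bias.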
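The role of k in kNN as a bias/variance knob can be seen in a small experiment. This is a sketch under assumptions: it reuses the quadratic toy data from earlier sections, switches to `KNeighborsRegressor` because the target is continuous, and picks k values 1, 5 and 30 arbitrarily.

```python
import numpy as np
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsRegressor

np.random.seed(666)
x = np.random.uniform(-3.0, 3.0, size=100)
X = x.reshape(-1, 1)
y = 0.5 * x**2 + x + 2 + np.random.normal(0, 1, size=100)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=666)

results = {}
for k in (1, 5, 30):
    knn = KNeighborsRegressor(n_neighbors=k)
    knn.fit(X_train, y_train)
    train_mse = mean_squared_error(y_train, knn.predict(X_train))
    test_mse = mean_squared_error(y_test, knn.predict(X_test))
    results[k] = (train_mse, test_mse)
    print(k, train_mse, test_mse)
```

With k=1 every training point is its own nearest neighbour, so the training error is zero while the model follows the noise (high variance). A large k averages over many neighbours, which smooths the prediction and raises the training error (higher bias).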

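Variance

The main challenge in machine learning comes from variance!

Common ways to deal with high variance:

- Reduce model complexity

- Reduce the dimensionality of the data; denoise

- Increase the number of samples

- Use a validation set

- Regularize the model

Model Regularization

Model regularization: constrain the size of the parameters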

Ridge Regression
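For reference, ridge regression constrains the parameters by adding a squared (L2) penalty to the least-squares loss. The form below follows the objective documented for scikit-learn's `Ridge`; the symbols $X$, $y$, $w$ are notation introduced here, and the intercept is not penalized.

```latex
\[
\min_{w} \; \lVert y - Xw \rVert_2^2 + \alpha \lVert w \rVert_2^2
\]
```

A larger $\alpha$ puts more weight on keeping the coefficients small, which flattens the fitted curve; as $\alpha$ approaches 0 the problem reduces to ordinary least squares.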

Generate the data

import numpy as np

import matplotlib.pyplot as plt

np.random.seed(42)

x = np.random.uniform(-3.0, 3.0, size=100)

X = x.reshape(-1, 1)

y = 0.5 * x + 3 + np.random.normal(0, 1, size=100)

plt.scatter(x, y)

plt.show()

Polynomial regression

from sklearn.pipeline import Pipeline

from sklearn.preprocessing import PolynomialFeatures

from sklearn.preprocessing import StandardScaler

from sklearn.linear_model import LinearRegression

def PolynomialRegression(degree):
    return Pipeline([
        ("poly", PolynomialFeatures(degree=degree)),
        ("std_scaler", StandardScaler()),
        ("lin_reg", LinearRegression())
    ])

from sklearn.model_selection import train_test_split

np.random.seed(666)

X_train, X_test, y_train, y_test = train_test_split(X, y)

from sklearn.metrics import mean_squared_error

poly_reg = PolynomialRegression(degree=20)

poly_reg.fit(X_train, y_train)

y_poly_predict = poly_reg.predict(X_test)

mean_squared_error(y_test, y_poly_predict)

X_plot = np.linspace(-3, 3, 100).reshape(100, 1)

y_plot = poly_reg.predict(X_plot)

plt.scatter(x, y)

plt.plot(X_plot[:,0], y_plot, color='r')

plt.axis([-3, 3, 0, 6])

plt.show()

Wrap the plotting in a function

def plot_model(model):
    # Evaluate the model on evenly spaced points and draw it over the data
    X_plot = np.linspace(-3, 3, 100).reshape(100, 1)
    y_plot = model.predict(X_plot)
    plt.scatter(x, y)
    plt.plot(X_plot[:,0], y_plot, color='r')
    plt.axis([-3, 3, 0, 6])
    plt.show()

plot_model(poly_reg)

Use ridge regression

from sklearn.linear_model import Ridge

def RidgeRegression(degree, alpha):
    return Pipeline([
        ("poly", PolynomialFeatures(degree=degree)),
        ("std_scaler", StandardScaler()),
        ("ridge_reg", Ridge(alpha=alpha))
    ])

ridge1_reg = RidgeRegression(20, 0.0001)

ridge1_reg.fit(X_train, y_train)

y1_predict = ridge1_reg.predict(X_test)

mean_squared_error(y_test, y1_predict)

plot_model(ridge1_reg)

Increase alpha

ridge2_reg = RidgeRegression(20, 1)

ridge2_reg.fit(X_train, y_train)

y2_predict = ridge2_reg.predict(X_test)

mean_squared_error(y_test, y2_predict)

plot_model(ridge2_reg)

ridge3_reg = RidgeRegression(20, 100)

ridge3_reg.fit(X_train, y_train)

y3_predict = ridge3_reg.predict(X_test)

mean_squared_error(y_test, y3_predict)

plot_model(ridge3_reg)

LASSO
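For reference, LASSO replaces the squared penalty with an absolute-value (L1) penalty. The form below follows the objective documented for scikit-learn's `Lasso`; $n$ denotes the number of samples, and the symbols are notation introduced here rather than taken from the notes.

```latex
\[
\min_{w} \; \frac{1}{2n} \lVert y - Xw \rVert_2^2 + \alpha \lVert w \rVert_1
\]
```

Because the L1 penalty has a corner at zero, increasing $\alpha$ tends to drive some coefficients exactly to zero, so LASSO can also act as a feature selector.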

Generate the data

import numpy as np

import matplotlib.pyplot as plt

np.random.seed(42)

x = np.random.uniform(-3.0, 3.0, size=100)

X = x.reshape(-1, 1)

y = 0.5 * x + 3 + np.random.normal(0, 1, size=100)

plt.scatter(x, y)

plt.show()

Split the data

from sklearn.model_selection import train_test_split

np.random.seed(666)

X_train, X_test, y_train, y_test = train_test_split(X, y)

Polynomial regression

from sklearn.pipeline import Pipeline

from sklearn.preprocessing import PolynomialFeatures

from sklearn.preprocessing import StandardScaler

from sklearn.linear_model import LinearRegression

def PolynomialRegression(degree):
    return Pipeline([
        ("poly", PolynomialFeatures(degree=degree)),
        ("std_scaler", StandardScaler()),
        ("lin_reg", LinearRegression())
    ])

from sklearn.metrics import mean_squared_error

poly_reg = PolynomialRegression(degree=20)

poly_reg.fit(X_train, y_train)

y_predict = poly_reg.predict(X_test)

mean_squared_error(y_test, y_predict)

Visualize

def plot_model(model):
    # Evaluate the model on evenly spaced points and draw it over the data
    X_plot = np.linspace(-3, 3, 100).reshape(100, 1)
    y_plot = model.predict(X_plot)
    plt.scatter(x, y)
    plt.plot(X_plot[:,0], y_plot, color='r')
    plt.axis([-3, 3, 0, 6])
    plt.show()

plot_model(poly_reg)

Lasso

from sklearn.linear_model import Lasso

def LassoRegression(degree, alpha):
    return Pipeline([
        ("poly", PolynomialFeatures(degree=degree)),
        ("std_scaler", StandardScaler()),
        ("lasso_reg", Lasso(alpha=alpha))
    ])

lasso1_reg = LassoRegression(20, 0.01)

lasso1_reg.fit(X_train, y_train)

y1_predict = lasso1_reg.predict(X_test)

mean_squared_error(y_test, y1_predict)

plot_model(lasso1_reg)

Increase alpha

lasso2_reg = LassoRegression(20, 0.1)

lasso2_reg.fit(X_train, y_train)

y2_predict = lasso2_reg.predict(X_test)

mean_squared_error(y_test, y2_predict)

plot_model(lasso2_reg)

lasso3_reg = LassoRegression(20, 1)

lasso3_reg.fit(X_train, y_train)

y3_predict = lasso3_reg.predict(X_test)

mean_squared_error(y_test, y3_predict)

plot_model(lasso3_reg)

Comparing Ridge and LASSO

L1 regularization, L2 regularization

L0 regularization

Elastic Net
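One practical difference between these penalties is how many coefficients end up exactly zero. The sketch below fits Ridge, Lasso and ElasticNet on the same linear toy data and counts zero coefficients. Several choices are assumptions made for this illustration: degree 10, the alpha values, `l1_ratio=0.5`, a raised `max_iter`, the helper name `make_model`, and `include_bias=False` so the constant column does not distort the count.

```python
import numpy as np
from sklearn.linear_model import ElasticNet, Lasso, Ridge
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import PolynomialFeatures, StandardScaler

np.random.seed(42)
x = np.random.uniform(-3.0, 3.0, size=100)
X = x.reshape(-1, 1)
y = 0.5 * x + 3 + np.random.normal(0, 1, size=100)

def make_model(regressor, degree=10):
    # Hypothetical helper: the regressor fits its own intercept,
    # so the all-ones column is left out of the polynomial features.
    return Pipeline([
        ("poly", PolynomialFeatures(degree=degree, include_bias=False)),
        ("std_scaler", StandardScaler()),
        ("reg", regressor),
    ])

models = {
    "ridge": Ridge(alpha=1),                                  # L2 penalty
    "lasso": Lasso(alpha=0.1, max_iter=100000),               # L1 penalty
    "elastic_net": ElasticNet(alpha=0.1, l1_ratio=0.5,        # mix of L1 and L2
                              max_iter=100000),
}

zero_counts = {}
for name, regressor in models.items():
    model = make_model(regressor).fit(X, y)
    coef = model.named_steps["reg"].coef_
    zero_counts[name] = int(np.sum(coef == 0))
    print(name, zero_counts[name], "of", coef.size, "coefficients are exactly zero")
```

Ridge shrinks coefficients toward zero without typically reaching it, while the L1 term in Lasso and Elastic Net can remove features entirely. Elastic Net blends the two penalties, with `l1_ratio` controlling the mix.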