Codec Layer

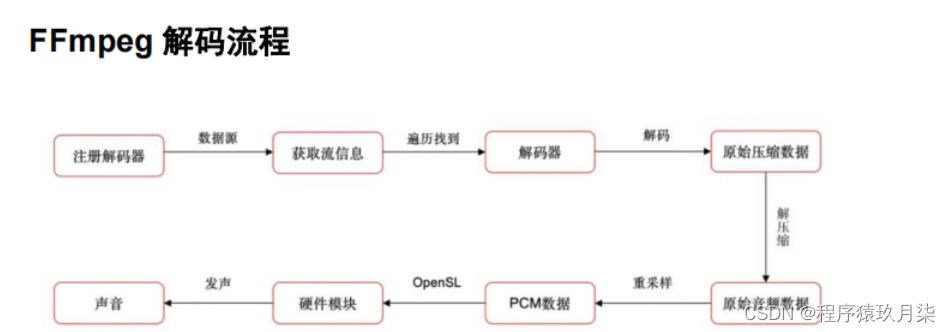

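1. Decoding

The steps below use the function names of early FFmpeg releases. Where a name has since been replaced, the current equivalent (as used in the example that follows) is given in parentheses.

(1) Register all container formats and codecs: av_register_all() (deprecated since FFmpeg 4.0 and no longer needed)

(2) Open the file: av_open_input_file() (now avformat_open_input())

(3) Extract stream information from the file: av_find_stream_info() (now avformat_find_stream_info())

(4) Iterate over all streams and find the one whose type is CODEC_TYPE_VIDEO (now AVMEDIA_TYPE_VIDEO; av_find_best_stream() performs this search)

(5) Find the matching decoder: avcodec_find_decoder()

(6) Open the codec: avcodec_open() (now avcodec_open2())

(7) Allocate memory for the decoded frame: avcodec_alloc_frame() (now av_frame_alloc())

(8) Repeatedly read packets (compressed frame data) from the stream: av_read_frame()

(9) Check the packet type and, for video, call avcodec_decode_video() (now the avcodec_send_packet() / avcodec_receive_frame() pair)

(10) When decoding is finished, release the decoder: avcodec_close() (the example uses avcodec_free_context())

(11) Close the input file: av_close_input_file() (now avformat_close_input())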
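Steps (8) and (9) are the heart of the loop. In current FFmpeg the decode call is a send/receive pair, and one packet may yield zero, one, or several frames, so the receiving side has to be drained after every send. The sketch below models only that control flow in plain C, without linking FFmpeg. `ToyDecoder`, the `toy_*` functions, and the `TOY_*` codes are hypothetical stand-ins for `avcodec_send_packet()`, `avcodec_receive_frame()`, `AVERROR(EAGAIN)`, and `AVERROR_EOF`, and the one-packet delay is an assumption chosen to make the flush step visible.

```c
#include <stddef.h>

/* Toy stand-ins for the real return codes; the values are arbitrary. */
enum { TOY_OK = 0, TOY_EAGAIN = -1, TOY_EOF = -2 };

/* Hypothetical decoder that holds back one packet before emitting frames,
 * roughly the way a codec with frame reordering delays its output. */
typedef struct ToyDecoder {
    int queued;    /* packets accepted but not yet output as frames */
    int draining;  /* set once a NULL (flush) packet has been sent */
} ToyDecoder;

/* Model of avcodec_send_packet(): a NULL packet switches to draining. */
static int toy_send_packet(ToyDecoder *d, const int *pkt)
{
    if (pkt == NULL)
        d->draining = 1;
    else
        d->queued++;
    return TOY_OK;
}

/* Model of avcodec_receive_frame(): keeps one packet back until flushed. */
static int toy_receive_frame(ToyDecoder *d, int *frame)
{
    int keep = d->draining ? 0 : 1;
    if (d->queued > keep) {
        d->queued--;
        *frame = 1;  /* placeholder for decoded frame data */
        return TOY_OK;
    }
    return d->draining ? TOY_EOF : TOY_EAGAIN;
}

/* Send once, then receive until the decoder reports "need more input"
 * or "fully drained". Returns the number of frames produced, or a
 * negative code if sending fails. */
static int toy_decode_packet(ToyDecoder *d, const int *pkt)
{
    int frames = 0, frame;
    int ret = toy_send_packet(d, pkt);
    if (ret < 0)
        return ret;
    for (;;) {
        ret = toy_receive_frame(d, &frame);
        if (ret == TOY_EAGAIN || ret == TOY_EOF)
            return frames;  /* not errors: no more output for now */
        frames++;
    }
}
```

Feeding three packets and then a NULL flush packet to this model yields 0, 1, 1, and 1 frames respectively. The last frame only appears once the decoder is flushed, which is why a real decode loop sends a NULL packet after av_read_frame() stops returning data.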

    /**
     * @file
     * Demuxing and decoding example.
     *
     * Show how to use the libavformat and libavcodec API to demux and
     * decode audio and video data.
     * @example demuxing_decoding.c
     */

    #include <libavutil/imgutils.h>
    #include <libavutil/samplefmt.h>
    #include <libavutil/timestamp.h>
    #include <libavcodec/avcodec.h>
    #include <libavformat/avformat.h>

    static AVFormatContext *fmt_ctx = NULL;
    static AVCodecContext *video_dec_ctx = NULL, *audio_dec_ctx;
    static int width, height;
    static enum AVPixelFormat pix_fmt;
    static AVStream *video_stream = NULL, *audio_stream = NULL;
    static const char *src_filename = NULL;
    static const char *video_dst_filename = NULL;
    static const char *audio_dst_filename = NULL;
    static FILE *video_dst_file = NULL;
    static FILE *audio_dst_file = NULL;

    static uint8_t *video_dst_data[4] = {NULL};
    static int video_dst_linesize[4];
    static int video_dst_bufsize;

    static int video_stream_idx = -1, audio_stream_idx = -1;
    static AVFrame *frame = NULL;
    static AVPacket pkt;
    static int video_frame_count = 0;
    static int audio_frame_count = 0;

    static int output_video_frame(AVFrame *frame)
    {
        if (frame->width != width || frame->height != height ||
            frame->format != pix_fmt) {
            /* To handle this change, one could call av_image_alloc again and
             * decode the following frames into another rawvideo file. */
            fprintf(stderr, "Error: Width, height and pixel format have to be "
                    "constant in a rawvideo file, but the width, height or "
                    "pixel format of the input video changed:\n"
                    "old: width = %d, height = %d, format = %s\n"
                    "new: width = %d, height = %d, format = %s\n",
                    width, height, av_get_pix_fmt_name(pix_fmt),
                    frame->width, frame->height,
                    av_get_pix_fmt_name(frame->format));
            return -1;
        }

        printf("video_frame n:%d coded_n:%d\n",
               video_frame_count++, frame->coded_picture_number);

        /* copy decoded frame to destination buffer:
         * this is required since rawvideo expects non aligned data */
        av_image_copy(video_dst_data, video_dst_linesize,
                      (const uint8_t **)(frame->data), frame->linesize,
                      pix_fmt, width, height);

        /* write to rawvideo file */
        fwrite(video_dst_data[0], 1, video_dst_bufsize, video_dst_file);
        return 0;
    }

    static int output_audio_frame(AVFrame *frame)
    {
        size_t unpadded_linesize = frame->nb_samples * av_get_bytes_per_sample(frame->format);
        printf("audio_frame n:%d nb_samples:%d pts:%s\n",
               audio_frame_count++, frame->nb_samples,
               av_ts2timestr(frame->pts, &audio_dec_ctx->time_base));

        /* Write the raw audio data samples of the first plane. This works
         * fine for packed formats (e.g. AV_SAMPLE_FMT_S16). However,
         * most audio decoders output planar audio, which uses a separate
         * plane of audio samples for each channel (e.g. AV_SAMPLE_FMT_S16P).
         * In other words, this code will write only the first audio channel
         * in these cases.
         * You should use libswresample or libavfilter to convert the frame
         * to packed data. */
        fwrite(frame->extended_data[0], 1, unpadded_linesize, audio_dst_file);

        return 0;
    }

    static int decode_packet(AVCodecContext *dec, const AVPacket *pkt)
    {
        int ret = 0;

        // submit the packet to the decoder
        ret = avcodec_send_packet(dec, pkt);
        if (ret < 0) {
            fprintf(stderr, "Error submitting a packet for decoding (%s)\n", av_err2str(ret));
            return ret;
        }

        // get all the available frames from the decoder
        while (ret >= 0) {
            ret = avcodec_receive_frame(dec, frame);
            if (ret < 0) {
                // those two return values are special and mean there is no output
                // frame available, but there were no errors during decoding
                if (ret == AVERROR_EOF || ret == AVERROR(EAGAIN))
                    return 0;

                fprintf(stderr, "Error during decoding (%s)\n", av_err2str(ret));
                return ret;
            }

            // write the frame data to output file
            if (dec->codec->type == AVMEDIA_TYPE_VIDEO)
                ret = output_video_frame(frame);
            else
                ret = output_audio_frame(frame);

            av_frame_unref(frame);
            if (ret < 0)
                return ret;
        }

        return 0;
    }

    static int open_codec_context(int *stream_idx,
                                  AVCodecContext **dec_ctx, AVFormatContext *fmt_ctx,
                                  enum AVMediaType type)
    {
        int ret, stream_index;
        AVStream *st;
        /* const: avcodec_find_decoder() returns a const pointer in FFmpeg 5+ */
        const AVCodec *dec = NULL;
        AVDictionary *opts = NULL;

        ret = av_find_best_stream(fmt_ctx, type, -1, -1, NULL, 0);
        if (ret < 0) {
            fprintf(stderr, "Could not find %s stream in input file '%s'\n",
                    av_get_media_type_string(type), src_filename);
            return ret;
        } else {
            stream_index = ret;
            st = fmt_ctx->streams[stream_index];

            /* find decoder for the stream */
            dec = avcodec_find_decoder(st->codecpar->codec_id);
            if (!dec) {
                fprintf(stderr, "Failed to find %s codec\n",
                        av_get_media_type_string(type));
                return AVERROR(EINVAL);
            }

            /* Allocate a codec context for the decoder */
            *dec_ctx = avcodec_alloc_context3(dec);
            if (!*dec_ctx) {
                fprintf(stderr, "Failed to allocate the %s codec context\n",
                        av_get_media_type_string(type));
                return AVERROR(ENOMEM);
            }

            /* Copy codec parameters from input stream to output codec context */
            if ((ret = avcodec_parameters_to_context(*dec_ctx, st->codecpar)) < 0) {
                fprintf(stderr, "Failed to copy %s codec parameters to decoder context\n",
                        av_get_media_type_string(type));
                return ret;
            }

            /* Init the decoders */
            if ((ret = avcodec_open2(*dec_ctx, dec, &opts)) < 0) {
                fprintf(stderr, "Failed to open %s codec\n",
                        av_get_media_type_string(type));
                return ret;
            }
            *stream_idx = stream_index;
        }

        return 0;
    }

    static int get_format_from_sample_fmt(const char **fmt,
                                          enum AVSampleFormat sample_fmt)
    {
        int i;
        struct sample_fmt_entry {
            enum AVSampleFormat sample_fmt; const char *fmt_be, *fmt_le;
        } sample_fmt_entries[] = {
            { AV_SAMPLE_FMT_U8,  "u8",    "u8"    },
            { AV_SAMPLE_FMT_S16, "s16be", "s16le" },
            { AV_SAMPLE_FMT_S32, "s32be", "s32le" },
            { AV_SAMPLE_FMT_FLT, "f32be", "f32le" },
            { AV_SAMPLE_FMT_DBL, "f64be", "f64le" },
        };
        *fmt = NULL;

        for (i = 0; i < FF_ARRAY_ELEMS(sample_fmt_entries); i++) {
            struct sample_fmt_entry *entry = &sample_fmt_entries[i];
            if (sample_fmt == entry->sample_fmt) {
                *fmt = AV_NE(entry->fmt_be, entry->fmt_le);
                return 0;
            }
        }

        fprintf(stderr,
                "sample format %s is not supported as output format\n",
                av_get_sample_fmt_name(sample_fmt));
        return -1;
    }

    int main (int argc, char **argv)
    {
        int ret = 0;

        if (argc != 4) {
            fprintf(stderr, "usage: %s input_file video_output_file audio_output_file\n"
                    "API example program to show how to read frames from an input file.\n"
                    "This program reads frames from a file, decodes them, and writes decoded\n"
                    "video frames to a rawvideo file named video_output_file, and decoded\n"
                    "audio frames to a rawaudio file named audio_output_file.\n",
                    argv[0]);
            exit(1);
        }
        src_filename = argv[1];
        video_dst_filename = argv[2];
        audio_dst_filename = argv[3];

        /* open input file, and allocate format context */
        if (avformat_open_input(&fmt_ctx, src_filename, NULL, NULL) < 0) {
            fprintf(stderr, "Could not open source file %s\n", src_filename);
            exit(1);
        }

        /* retrieve stream information */
        if (avformat_find_stream_info(fmt_ctx, NULL) < 0) {
            fprintf(stderr, "Could not find stream information\n");
            exit(1);
        }

        if (open_codec_context(&video_stream_idx, &video_dec_ctx, fmt_ctx, AVMEDIA_TYPE_VIDEO) >= 0) {
            video_stream = fmt_ctx->streams[video_stream_idx];

            video_dst_file = fopen(video_dst_filename, "wb");
            if (!video_dst_file) {
                fprintf(stderr, "Could not open destination file %s\n", video_dst_filename);
                ret = 1;
                goto end;
            }

            /* allocate image where the decoded image will be put */
            width = video_dec_ctx->width;
            height = video_dec_ctx->height;
            pix_fmt = video_dec_ctx->pix_fmt;
            ret = av_image_alloc(video_dst_data, video_dst_linesize,
                                 width, height, pix_fmt, 1);
            if (ret < 0) {
                fprintf(stderr, "Could not allocate raw video buffer\n");
                goto end;
            }
            video_dst_bufsize = ret;
        }

        if (open_codec_context(&audio_stream_idx, &audio_dec_ctx, fmt_ctx, AVMEDIA_TYPE_AUDIO) >= 0) {
            audio_stream = fmt_ctx->streams[audio_stream_idx];
            audio_dst_file = fopen(audio_dst_filename, "wb");
            if (!audio_dst_file) {
                fprintf(stderr, "Could not open destination file %s\n", audio_dst_filename);
                ret = 1;
                goto end;
            }
        }

        /* dump input information to stderr */
        av_dump_format(fmt_ctx, 0, src_filename, 0);

        if (!audio_stream && !video_stream) {
            fprintf(stderr, "Could not find audio or video stream in the input, aborting\n");
            ret = 1;
            goto end;
        }

        frame = av_frame_alloc();
        if (!frame) {
            fprintf(stderr, "Could not allocate frame\n");
            ret = AVERROR(ENOMEM);
            goto end;
        }

        /* initialize packet, set data to NULL, let the demuxer fill it */
        av_init_packet(&pkt);
        pkt.data = NULL;
        pkt.size = 0;

        if (video_stream)
            printf("Demuxing video from file '%s' into '%s'\n", src_filename, video_dst_filename);
        if (audio_stream)
            printf("Demuxing audio from file '%s' into '%s'\n", src_filename, audio_dst_filename);

        /* read frames from the file */
        while (av_read_frame(fmt_ctx, &pkt) >= 0) {
            // check if the packet belongs to a stream we are interested in, otherwise
            // skip it
            if (pkt.stream_index == video_stream_idx)
                ret = decode_packet(video_dec_ctx, &pkt);
            else if (pkt.stream_index == audio_stream_idx)
                ret = decode_packet(audio_dec_ctx, &pkt);
            av_packet_unref(&pkt);
            if (ret < 0)
                break;
        }

        /* flush the decoders */
        if (video_dec_ctx)
            decode_packet(video_dec_ctx, NULL);
        if (audio_dec_ctx)
            decode_packet(audio_dec_ctx, NULL);

        printf("Demuxing succeeded.\n");

        if (video_stream) {
            printf("Play the output video file with the command:\n"
                   "ffplay -f rawvideo -pix_fmt %s -video_size %dx%d %s\n",
                   av_get_pix_fmt_name(pix_fmt), width, height,
                   video_dst_filename);
        }

        if (audio_stream) {
            enum AVSampleFormat sfmt = audio_dec_ctx->sample_fmt;
            int n_channels = audio_dec_ctx->channels;
            const char *fmt;

            if (av_sample_fmt_is_planar(sfmt)) {
                const char *packed = av_get_sample_fmt_name(sfmt);
                printf("Warning: the sample format the decoder produced is planar "
                       "(%s). This example will output the first channel only.\n",
                       packed ? packed : "?");
                sfmt = av_get_packed_sample_fmt(sfmt);
                n_channels = 1;
            }

            if ((ret = get_format_from_sample_fmt(&fmt, sfmt)) < 0)
                goto end;

            printf("Play the output audio file with the command:\n"
                   "ffplay -f %s -ac %d -ar %d %s\n",
                   fmt, n_channels, audio_dec_ctx->sample_rate,
                   audio_dst_filename);
        }

    end:
        avcodec_free_context(&video_dec_ctx);
        avcodec_free_context(&audio_dec_ctx);
        avformat_close_input(&fmt_ctx);
        if (video_dst_file)
            fclose(video_dst_file);
        if (audio_dst_file)
            fclose(audio_dst_file);
        av_frame_free(&frame);
        av_free(video_dst_data[0]);

        return ret < 0;
    }
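The comment in output_audio_frame() notes that planar audio keeps one plane per channel, so writing only the first plane drops every other channel, and it recommends libswresample or libavfilter for the conversion. To make the layout difference concrete, here is a minimal plain-C sketch of what interleaving means for 16-bit samples. `interleave_s16` is a hypothetical helper, not an FFmpeg function, and it assumes every plane holds exactly `nb_samples` values.

```c
#include <stddef.h>
#include <stdint.h>

/* Interleave planar 16-bit samples (one array per channel, as in
 * AV_SAMPLE_FMT_S16P) into packed order (L R L R ..., as in
 * AV_SAMPLE_FMT_S16). dst must hold nb_samples * nb_channels values. */
static void interleave_s16(int16_t *dst, const int16_t *const *planes,
                           int nb_channels, int nb_samples)
{
    for (int s = 0; s < nb_samples; s++)
        for (int ch = 0; ch < nb_channels; ch++)
            dst[(size_t)s * nb_channels + ch] = planes[ch][s];
}
```

With a left plane of {1, 2, 3} and a right plane of {-1, -2, -3}, the packed result is {1, -1, 2, -2, 3, -3}. In a real program the planes would come from frame->extended_data, and libswresample remains the better choice because it also handles other sample formats and rate conversion.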

2. Encoding

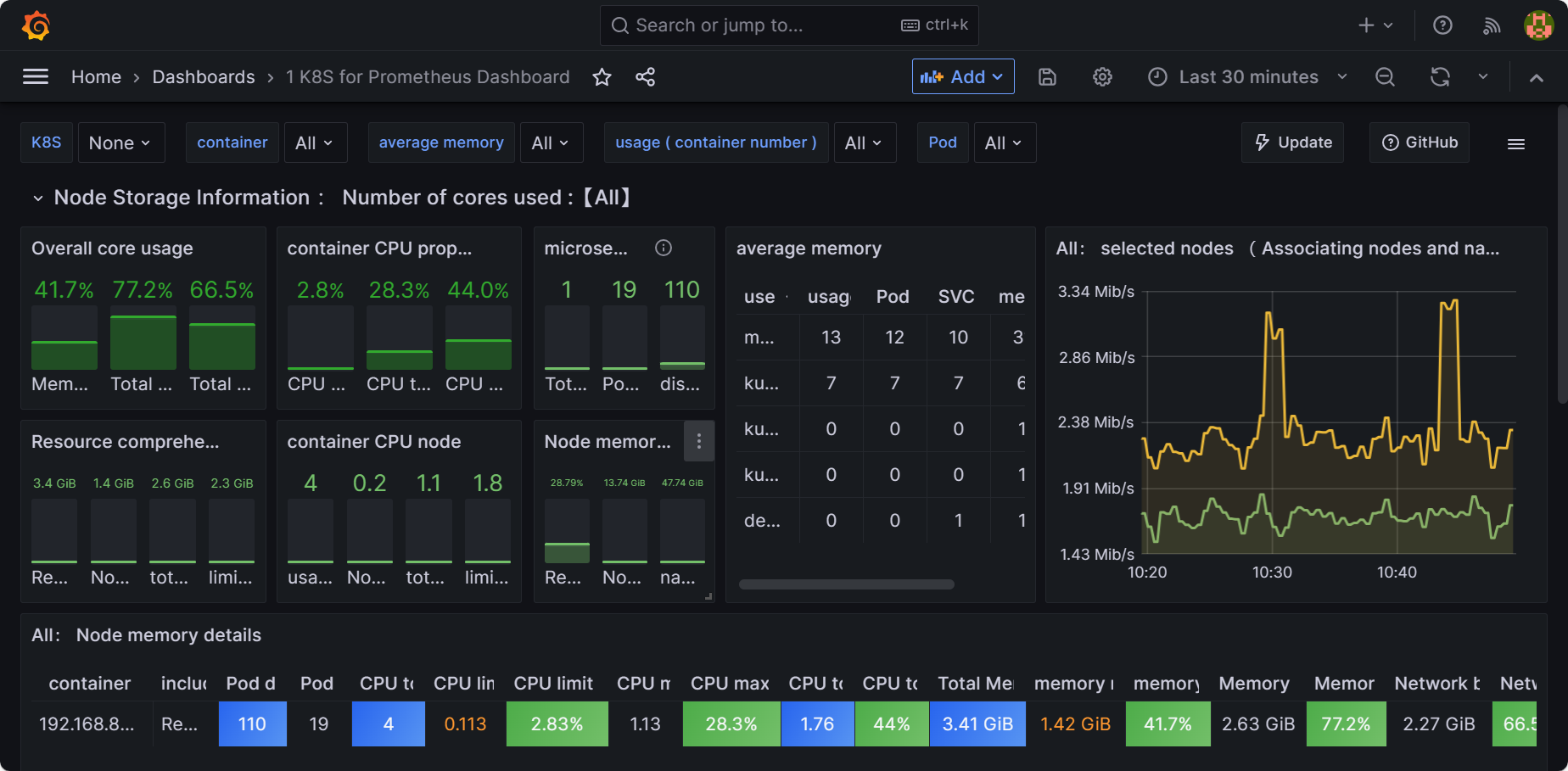

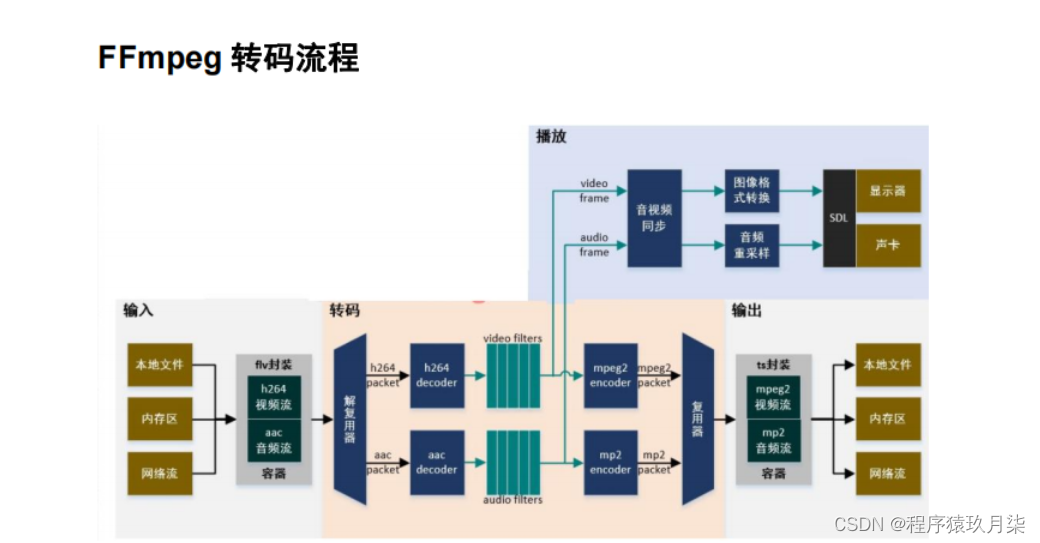

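The overall flow can be divided into four major blocks: input, output, transcoding, and playback.

Transcoding involves the most processing stages, and as the diagram shows, it takes up a large share of the overall function map. The core of transcoding is decoding and encoding, but in any usable sample program, encoding and decoding are hard to separate from input and output.

The demuxer supplies input to the decoder. The decoder outputs raw frames, which can then go through arbitrarily complex filter processing. The encoder turns the filtered frames into encoded frames (packets), and the muxer writes the encoded packets of the multiple streams to the output file.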
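The data flow just described can be sketched as a chain of stages in which the type of the data changes at each step: compressed packet, raw frame, raw frame, compressed packet. The plain-C model below does not link FFmpeg. `ToyPacket`, `ToyFrame`, and the `toy_*` functions are hypothetical stand-ins for AVPacket, AVFrame, and the real stages, and the arithmetic inside each stage is arbitrary and exists only so the stages can be told apart.

```c
#include <stddef.h>

/* Toy types: real code would use AVPacket and AVFrame. */
typedef struct ToyPacket { int stream; int data; } ToyPacket;  /* compressed */
typedef struct ToyFrame  { int stream; int data; } ToyFrame;   /* raw */

/* Decoder: compressed packet in, raw frame out (here: data * 10). */
static ToyFrame toy_decode(ToyPacket p)
{
    ToyFrame f = { p.stream, p.data * 10 };
    return f;
}

/* Filter: raw frame in, raw frame out (here: add 1). */
static ToyFrame toy_filter(ToyFrame f)
{
    f.data += 1;
    return f;
}

/* Encoder: raw frame in, compressed packet out (here: data / 10). */
static ToyPacket toy_encode(ToyFrame f)
{
    ToyPacket p = { f.stream, f.data / 10 };
    return p;
}

/* Run every demuxed packet through decode -> filter -> encode and append
 * the result to out[], which stands in for the muxer writing the output
 * file. Returns the number of packets written. */
static size_t toy_transcode(const ToyPacket *in, size_t n, ToyPacket *out)
{
    size_t written = 0;
    for (size_t i = 0; i < n; i++) {            /* demuxer output */
        ToyFrame raw = toy_decode(in[i]);
        ToyFrame filtered = toy_filter(raw);
        out[written++] = toy_encode(filtered);  /* muxer input */
    }
    return written;
}
```

Each packet keeps its stream index from input to output, which is what lets the muxer place the encoded packets of several streams into one file. A real pipeline is not strictly one-in, one-out like this model, since decoders and encoders may buffer data internally.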

    /**
     * @file
     * video encoding with libavcodec API example
     *
     * @example encode_video.c
     */

    #include <stdio.h>
    #include <stdlib.h>
    #include <string.h>

    #include <libavcodec/avcodec.h>

    #include <libavutil/opt.h>
    #include <libavutil/imgutils.h>

    static void encode(AVCodecContext *enc_ctx, AVFrame *frame, AVPacket *pkt,
                       FILE *outfile)
    {
        int ret;

        /* send the frame to the encoder */
        if (frame)
            printf("Send frame %3"PRId64"\n", frame->pts);

        ret = avcodec_send_frame(enc_ctx, frame);
        if (ret < 0) {
            fprintf(stderr, "Error sending a frame for encoding\n");
            exit(1);
        }

        while (ret >= 0) {
            ret = avcodec_receive_packet(enc_ctx, pkt);
            if (ret == AVERROR(EAGAIN) || ret == AVERROR_EOF)
                return;
            else if (ret < 0) {
                fprintf(stderr, "Error during encoding\n");
                exit(1);
            }

            printf("Write packet %3"PRId64" (size=%5d)\n", pkt->pts, pkt->size);
            fwrite(pkt->data, 1, pkt->size, outfile);
            av_packet_unref(pkt);
        }
    }

    int main(int argc, char **argv)
    {
        const char *filename, *codec_name;
        const AVCodec *codec;
        AVCodecContext *c = NULL;
        int i, ret, x, y;
        FILE *f;
        AVFrame *frame;
        AVPacket *pkt;
        uint8_t endcode[] = { 0, 0, 1, 0xb7 };

        if (argc <= 2) {
            fprintf(stderr, "Usage: %s <output file> <codec name>\n", argv[0]);
            exit(0);
        }
        filename = argv[1];
        codec_name = argv[2];

        /* find the encoder named on the command line (e.g. mpeg1video) */
        codec = avcodec_find_encoder_by_name(codec_name);
        if (!codec) {
            fprintf(stderr, "Codec '%s' not found\n", codec_name);
            exit(1);
        }

        c = avcodec_alloc_context3(codec);
        if (!c) {
            fprintf(stderr, "Could not allocate video codec context\n");
            exit(1);
        }

        pkt = av_packet_alloc();
        if (!pkt)
            exit(1);

        /* put sample parameters */
        c->bit_rate = 400000;
        /* resolution must be a multiple of two */
        c->width = 352;
        c->height = 288;
        /* frames per second */
        c->time_base = (AVRational){1, 25};
        c->framerate = (AVRational){25, 1};

        /* emit one intra frame every ten frames
         * check frame pict_type before passing frame
         * to encoder, if frame->pict_type is AV_PICTURE_TYPE_I
         * then gop_size is ignored and the output of encoder
         * will always be I frame irrespective to gop_size
         */
        c->gop_size = 10;
        c->max_b_frames = 1;
        c->pix_fmt = AV_PIX_FMT_YUV420P;

        if (codec->id == AV_CODEC_ID_H264)
            av_opt_set(c->priv_data, "preset", "slow", 0);

        /* open it */
        ret = avcodec_open2(c, codec, NULL);
        if (ret < 0) {
            fprintf(stderr, "Could not open codec: %s\n", av_err2str(ret));
            exit(1);
        }

        f = fopen(filename, "wb");
        if (!f) {
            fprintf(stderr, "Could not open %s\n", filename);
            exit(1);
        }

        frame = av_frame_alloc();
        if (!frame) {
            fprintf(stderr, "Could not allocate video frame\n");
            exit(1);
        }
        frame->format = c->pix_fmt;
        frame->width  = c->width;
        frame->height = c->height;

        ret = av_frame_get_buffer(frame, 0);
        if (ret < 0) {
            fprintf(stderr, "Could not allocate the video frame data\n");
            exit(1);
        }

        /* encode 1 second of video */
        for (i = 0; i < 25; i++) {
            fflush(stdout);

            /* make sure the frame data is writable */
            ret = av_frame_make_writable(frame);
            if (ret < 0)
                exit(1);

            /* prepare a dummy image */
            /* Y */
            for (y = 0; y < c->height; y++) {
                for (x = 0; x < c->width; x++) {
                    frame->data[0][y * frame->linesize[0] + x] = x + y + i * 3;
                }
            }

            /* Cb and Cr */
            for (y = 0; y < c->height/2; y++) {
                for (x = 0; x < c->width/2; x++) {
                    frame->data[1][y * frame->linesize[1] + x] = 128 + y + i * 2;
                    frame->data[2][y * frame->linesize[2] + x] = 64 + x + i * 5;
                }
            }

            frame->pts = i;

            /* encode the image */
            encode(c, frame, pkt, f);
        }

        /* flush the encoder */
        encode(c, NULL, pkt, f);

        /* add sequence end code to have a real MPEG file */
        if (codec->id == AV_CODEC_ID_MPEG1VIDEO || codec->id == AV_CODEC_ID_MPEG2VIDEO)
            fwrite(endcode, 1, sizeof(endcode), f);
        fclose(f);

        avcodec_free_context(&c);
        av_frame_free(&frame);
        av_packet_free(&pkt);

        return 0;
    }
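The dummy-image loops in the encoder example fill a full-resolution Y plane and two half-resolution chroma planes, which is the layout of 8-bit YUV 4:2:0 and the reason the width and height must be even. The following sketch works out the plane sizes for a tightly packed image. `Yuv420Sizes` and `yuv420p_sizes` are hypothetical names, and the calculation ignores row padding, whereas a real AVFrame may pad each row (which is why the example indexes rows with frame->linesize rather than the width).

```c
#include <stddef.h>

/* Byte sizes of the three planes of a tightly packed 8-bit YUV 4:2:0 image. */
typedef struct Yuv420Sizes {
    size_t y;      /* luma: one byte per pixel */
    size_t cb;     /* chroma: one byte per 2x2 block of pixels */
    size_t cr;     /* same size as cb */
    size_t total;  /* bytes per frame */
} Yuv420Sizes;

/* Assumes width and height are even, as the encoder setup requires. */
static Yuv420Sizes yuv420p_sizes(int width, int height)
{
    Yuv420Sizes s;
    s.y = (size_t)width * (size_t)height;
    s.cb = (size_t)(width / 2) * (size_t)(height / 2);
    s.cr = s.cb;
    s.total = s.y + s.cb + s.cr;
    return s;
}
```

For the 352x288 frames used in the example this gives 101376 bytes of luma and 25344 bytes for each chroma plane, 152064 bytes per frame, or 1.5 bytes per pixel.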